by Robyn Bolton | Mar 25, 2026 | AI, Leadership, Strategy

“AI is the new cigarette.”

When a colleague said this in the waning days of 2022, days after ChatGPT burst on the scene, she took my breath away. The idea that this miracle would kill us seemed confined to hysterical handwringing foretelling the birth of Skynet.

She was right.

But neither of us knew it was designed to be that way.

Designed for addiction

My friend predicted that ChatGPT would stay free and helpful until usage reached “critical mass,” and then we’d have to pay. Less than three months after its November launch, OpenAI introduced its $20 per month service.

But it’s not the “first one’s free, the next one will cost you” aspect of drugs that makes AI addictive. It’s the design decisions at its core that keep you coming back:

- Purchase Decoupling, in which you convert real money into tokens, creating psychological distance between you and your actual spending.

- Difficulty Curve, where skills and benefits accumulate quickly, giving you the sense that you’re becoming more capable over time and leaving you more committed after progress slows.

- Skill Atrophy, where every skill you stop practicing because the machine does it for you quietly disappears.

Even casual AI users have experienced one or more of these:

- You get a message mid-chat telling you you’ve used all your tokens and need to come back in three hours even though you’ve paid your monthly $20 fee

- You’re prompting in all caps because it’s the only way you can think of to get the LLM to stop hallucinating, while reminiscing about the days when it was a brilliant thought-partner

- You’ve relied on AI to outline articles for the last several months, but you need to write in a different style and have no idea how to get started.

And yet, we keep going back.

But it’s not just individuals who are addicted. It’s entire organizations.

Signs that your organization is addicted to AI

Your CFO asks for the total AI spend across the organization. Three weeks and four departments later, the number is three times what anyone expected because the licenses are buried in IT infrastructure budgets, the pilots are expensed as innovation projects, and half the tools were purchased by business units on corporate cards.

The board approved the AI transformation initiative based on the pilot results. Eighteen months later, the pilot case study slide hasn’t changed, headcount has been reduced in anticipation of productivity gains that haven’t materialized, and the team running the pilot has quietly moved on to other work.

You eliminated the analyst pool two years ago because AI could do in minutes what they did in days. Now you need to evaluate whether the AI’s output is actually correct, and you’ve just realized there’s nobody left in the organization to check it because everyone who’s done it is gone.

Sound familiar? Your organization is an addict.

Recovery is possible

Addiction can’t be cured, only managed. The same is true for AI.

The road to recovery starts in a similar place: Visibility

- Centralize AI spending the way you centralize other business processes AND allow some flexibility by setting strict spending limits and clear decision-making criteria and ownership.

- Start pilots with the end in mind by establishing success metrics and scaling plans at the start of the pilot, not when it’s already in process.

- Treat certain human capabilities as strategic reserves the same way you’d treat any critical operational dependency. Before automating a function, explicitly document what judgment and expertise currently lives there, who holds it, and what it would cost to rebuild it if needed.

Unlike cigarettes or gambling, we’ve reached a point where we can’t quit AI.

But we can be aware of our addiction and we must manage it.

The first step is admitting that it’s real. And by design.

by Robyn Bolton | Mar 18, 2026 | AI, Customer Centricity, Leadership, Leading Through Uncertainty, Strategy

If you’re uncertain, you’re not alone. According to data from FactSet, 87% of Fortune 500 companies cited “uncertainty” during their 2025 Q1 earnings calls. And while things are definitely a tad chaotic in the world, I’ve started asking my clients, “What would you do if you were certain?”

It’s not an academic thought experiment. It’s a very practical exercise that radically shifts the way they think about and lead their businesses.

An Example That Proves the Rule

Most leaders facing disruption do one of two things: freeze and hope that “this too shall pass” or follow and hope that there is safety in numbers.

Neither is a strategy. Both are knee-jerk reactions rooted in fear and communicated in the language and buzzwords of business.

This behavior didn’t start with AI. It happens every time a disruptive technology or philosophy bursts onto the scene. The printing press. The industrial revolution. Microchips. Each time, a new leader and paradigm emerges. How do they do it?

They’re certain.

Not because they’re omniscient. But because they know the answers to three questions.

Question 1: Who Are You?

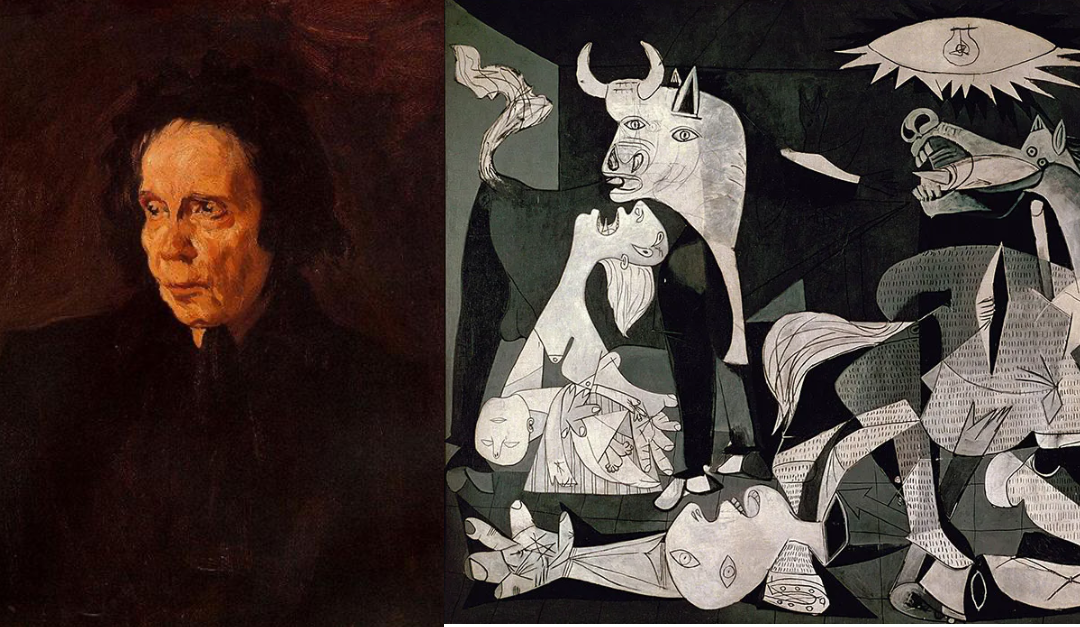

When photography made academic realism obsolete, Picasso didn’t freeze. He didn’t pick up a camera. He created something entirely new. Why? Because he knew exactly who he was. “I don’t seek,” he said. “I find.”

Today’s business icons are no different. Richard Branson describes himself as curious and someone who challenges the status quo. Lou Gerstner, when he arrived at a floundering IBM, declared himself a results man, not a visionary.

These self-definitions aren’t marketing. They’re decision filters that define what you are and aren’t willing to do, agnostic of events, technologies, and capabilities.

Question 2: What Does Your Organization Actually Do?

Not what you make. Not what you sell. What Job to be Done do customers hire you to do?

Nintendo’s answer has been consistent across 130 years of radical product change: help me have fun with friends and family. From playing cards to the Game Boy, Wii, and Switch, their products changed completely. The Job didn’t.

IBM has done the same. From punch card tabulators to consulting and AI, the Job of helping customers make sense of complex information to run better never changed. Amex moved from freight forwarding to credit and debit cards, but its commitment to move value securely when direct exchange isn’t an option never wavered.

When you know the Job you do, you stop chasing trends and start making choices.

Question 3: How Do You Move Forward?

You can’t answer this question without answering the first two. When you try, you get caught in the same freeze/follow trap as everyone else.

But when you answer the first two questions, the answer to this one becomes clear. Picasso and Branson create. Gerstner optimized the status quo. For most businesses, the answer is “And, not Or.” They must stabilize today’s business, step into (even follow) the next wave, and invest in creating the new.

Satya Nadella’s transformation of Microsoft is a perfect example. He defined himself as a learner, not a knower. He defined Microsoft’s job as helping people make a difference in their roles. From those two answers, every major move followed logically: maintain Office 365, step into cloud, create quantum computing technology.

None of it was reactive. All of it felt certain.

Your Moment Is Now

Yes, the world is uncertain. You don’t have to be.

Before you close this tab and tell yourself you’ll think about it later, answer the first two questions. You can change your answers later, but you need to start now.

The leaders who navigate this moment won’t be the ones who wait and see or follow the crowd. They’ll be the ones who know themselves and their organizations well enough to be certain.

by Robyn Bolton | Mar 11, 2026 | AI, Leading Through Uncertainty, Strategy

Thursday, February 26.

3:35 pm PST – Jack Dorsey said thank you and goodbye to 4,000 people. Block’s profitability was growing, but the promise of “intelligence tools…paired with flatter teams” enabled a fundamental shift in how the company could be run.

4:12 pm PST – He posted his farewell announcement to X for the world to read. In it he wrote, “I know doing it this way might feel awkward. I’d rather feel awkward and human than efficient and cold.”

Is there anything more darkly humorous than a CEO trying to avoid appearing efficient and cold when communicating a decision to make the company more efficient and cold?

Only the moment when your boss calls to ask how your plans to grow the business are going and then informs you that the C-Suite wants a plan “to do what Dorsey just did.”

Tuesday, March 10.

Time unknown – The agenda of Amazon’s weekly “This Week in Stores Tech” focused solely on investigating why “the availability of the site and related infrastructure has not been good recently.”

More specifically, why, for SIX HOURS, Amazon customers could not access their accounts, view product prices, or complete checkout. That is nearly $300M in lost revenue assuming the outage only affected North America.

All because, after years of cutting headcount and ramping up AI, junior engineers basically vibe-coded production changes.

As best practices and safeguards are yet to be “concretized,” it’s now the responsibility of senior engineers to review all production changes prepared by junior programmers.

How efficient is that AI looking now?

What we lose when we bet on hype, not proof

Researchers at Oxford have documented companies using AI as justification for cuts they had already planned. A January 2026 survey of 1,006 global executives found that 60% have made or will make cuts in anticipation of AI’s impact, while 29% plan to slow hiring. Only 2% have laid off staff because of actual AI-driven results.

Thousands of people are being laid off based on hype, not proof.

It’s reasonable to expect that, one day, AI will live up to the hype and deliver on all the promises promoters are making. But that’s a long-term bet that only pays out if you survive the inevitable crashes in efficiency, revenue, and institutional knowledge.

When organizations swap out people for “intelligence tools,” they lose institutional memory, the subtle, often unspoken, sometimes subconscious knowledge that makes things work. These are the people who understand your clients, your controls, and why past decisions were made. AI can automate workflows. It cannot replicate that knowledge. And once it’s gone, it’s gone.

And the loss continues even amongst the people who remain.

Research from MIT shows that regular AI use reduces activity in brain networks responsible for creativity and analogical thinking by 55%, and the atrophy persists even after people stop using AI tools. You are not trading people for AI. You are trading people for AI while simultaneously reducing your remaining team’s capacity to think creatively, adapt quickly, and catch mistakes. Operations get fragile. Innovation stalls. And when the AI-assisted work fails, as it did at Amazon, there’s no one left to fix it.

The root of growth is never hype

When the call comes down from on high to “do what Dorsey did” it’s hard to counter with cautionary tales like Amazon or reality checks about the state and capability of the organization.

But you can ask questions:

- Are you cutting based on what AI has delivered or what we expect it to?

- How will we ensure essential institutional knowledge isn’t lost?

- If (when) AI-assisted work fails, who fixes it? Amazon’s answers were still on staff. Will ours be, too?

Growth is essential to every organization. But you can’t cut your way to growth.

AI doesn’t change that fact.

It just makes it easier to believe the hype.

by Robyn Bolton | Feb 8, 2026 | AI, Leadership, Leading Through Uncertainty, Strategic Foresight, Strategy

In 2023, Klarna’s CEO proudly announced it had replaced 700 customer service workers with AI and that the chatbot was handling two-thirds of customer queries. Labor costs dropped and victory was declared.

By 2025, Klarna was rehiring. Customer satisfaction had tanked. The CEO admitted they “went too far,” focusing on efficiency over quality.

Like Captain Robert Scott, Klarna misjudged the circumstance it was in, applied the wrong playbook, and lost. It thought it had facts, but all it had was technical specs. It made tons of assumptions about chatbots’ ability to replace human judgment and how customers would respond.

Calibrated Decision Design, a process for diagnosing your circumstances before picking a playbook, consistently proves to be a quick and necessary step to ensure success.

When you have the facts and need results ASAP: Go NOW!

General Mills, like its competitors, had been digitizing its supply chain for years, so it had facts based on experience and a list of the facts it needed.

To close the gap and achieve end-to-end visibility in its supply chain, it worked with Palantir to develop a digital twin of its entire supply chain. Results: 30% waste reduction, $300 million in savings, and decisions that took weeks now take hours. It proves that you don’t need all the answers to make a move, but you need to know more than you don’t.

When you have hypotheses but can’t wait for results: Discovery Planning

Morgan Stanley Wealth Management’s (MSWM) clients expect advisors to bring them bespoke advice based on mountains of analysis and insights. But it’s impossible for any advisor to process all that data. Confident that AI could help but uncertain whether it would improve relationships or create friction, MSWM partnered with OpenAI.

Within six months, they debuted a GenAI chatbot to help Financial Advisors quickly access the firm’s IP. Document retrieval jumped from 20% to 80% and 98% now use it daily. Two years later, MSWM expanded into a meeting summary tool to summarize meetings into actionable outputs and update the CRM with notes and follow-ups. A perfect example of how a series of experiments leads to a series of successes.

When you have facts and time to achieve results: Patient Planning

Drug discovery requires patience and, while the process may be predictable, the results aren’t. That’s why pharma companies need strategies that are as thoughtfully planned as they are responsive.

Lilly is doing just that by investing in its own capabilities and building an ecosystem of partners. It started by launching TuneLab, a platform offering access to AI-enabled drug discovery models based on data that Lilly spent over $1 billion developing. A month later, the pharma giant announced a partnership with NVIDIA to build the pharmaceutical industry’s most powerful AI supercomputer. Two months later, it committed over $6 billion to a new manufacturing facility in Alabama. These aren’t billion-dollar bets, they’re thoughtful investments in a long-term future that allows Lilly to learn now and stay flexible as needs and technology evolve.

When you’re making assumptions and have time to learn: Resilient Strategy

There’s no way of knowing what the global energy system will look like in 40 years. That’s why Shell’s latest scenario planning efforts resulted in three distinct scenarios: Surge, Archipelagos, and Horizon. Multiple scenarios allow the company to “explore trade-offs between energy security, economic growth and addressing carbon emissions” and build resilient strategies to recognize which one is unfolding and pivot before competitors even spot what’s happening.

Stop benchmarking. Start diagnosing.

It’s easy to feel like you’re behind when it comes to AI. But the rush to act before you know the problem and the circumstances is far more likely to make you a cautionary tale than a poster child for success.

So, stop benchmarking what competitors do and start diagnosing the circumstances you’re in, so you use the playbook you need.

by Robyn Bolton | Feb 2, 2026 | AI, Leadership, Strategic Foresight, Strategy

It was a race. And the whole world was watching.

In 1911, Captain Robert Scott set out to reach the South Pole. He’d been to Antarctica before and because of his past success, he had more funding, more expertise, and more experience. He had all the equipment needed.

Racing him to fame, fortune and glory was Norwegian Roald Amundsen. Originally heading to the North Pole, he turned around when he learned that Robert Peary had beaten him there. He had dogs and skis, equipment perfect for the Arctic but unproven in Antarctica.

Amundsen won the race, by over a month.

Scott and his crew died 11 miles from the South Pole.

When the Playbook Stops Working

Scott wasn’t guessing. He’d tested motor sledges in the Alps. He’d seen ponies work on a previous Antarctic expedition. He built a plan around the best available equipment and the general playbook that had served British expeditions for decades: horses and motors move heavy loads, so use horses and motors.

It just wasn’t right for Antarctica. The motors broke down in the cold. The ponies sank through the ice. The plan that looked solid on paper fell apart the moment it met the actual environment it had to operate in.

The same thing is happening today with AI.

For decades, when new technologies emerged, executives followed a similarly familiar playbook: assess the opportunity, build a business case, plan the rollout, execute.

And for decades it worked. Cloud migrations and ERP implementations were architectural changes to known processes with predictable outcomes. As time went on, information grew more solid, timelines became better understood, and the playbook solidified.

AI is different. Executives are so focused on picking the right AI tools and building the right infrastructure that they aren’t thinking about what happens when they hit the ice. Even if the technology works as designed, you have no idea whether it will deliver the intended results or create a ripple of unintended consequences that paralyze your business and put egg on your face.

Diagnose Before You Prescribe

The circumstances of AI are different too, and that requires a new playbook. Make that playbooks. Picking the right playbook requires something my clients and I call Calibrated Decision Design.

We start by asking how long it will take to realize the ultimate goals of the investment. Do we need to break even this year, or is this a multi-year bet where results slowly roll in? Most teams have a sense of this, so it allows us to move quickly to the next, much harder question.

What do we know and what do we believe? This is where most teams and AI implementations fail. To seem confident and indispensable, people present hypotheses as if they are facts, resulting in decisions based on single data points or best guesses. The result is a confident decision destined to crumble.

Where you land on these two axes determines your playbook. Apply the wrong one and you’ll either waste money on over-analysis or burn through budget on premature action.

Pick from the Four Playbooks

Go NOW!: You have the facts and need results now. Stop deliberating. Execute.

Predictable Planning: You have confidence in the outcome, but the payoff takes patience. Build a flexible strategy and operational plan to stay responsive as things progress.

Discovery Planning: You need results fast, but you don’t have proof your plan will work. Run small, fast experiments before scaling anything.

Resilient Strategy: The time horizon is long and you’re short on facts. The worst thing you can do is go all in. Instead, envision multiple futures, identify early warning signs, find commonalities and prepare a strategy that can pivot.
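Read together, the two diagnostic questions and the four playbooks form a simple two-by-two lookup. The sketch below is only an illustration of that mapping, not part of Calibrated Decision Design itself: the function name `pick_playbook` and the two yes/no inputs are hypothetical simplifications, and real diagnoses are rarely this binary.

```python
from enum import Enum


class Playbook(Enum):
    """The four playbooks named in the post."""
    GO_NOW = "Go NOW!"
    PREDICTABLE_PLANNING = "Predictable Planning"
    DISCOVERY_PLANNING = "Discovery Planning"
    RESILIENT_STRATEGY = "Resilient Strategy"


def pick_playbook(have_facts: bool, need_results_fast: bool) -> Playbook:
    """Map the two axes (what you know, how soon you need results) to a playbook.

    Both parameters are illustrative assumptions: each axis is reduced
    to a single yes/no answer.
    """
    if have_facts:
        # Facts in hand: act now, or plan patiently if the payoff is far off.
        if need_results_fast:
            return Playbook.GO_NOW
        return Playbook.PREDICTABLE_PLANNING
    # Working from hypotheses: experiment fast, or prepare for multiple futures.
    if need_results_fast:
        return Playbook.DISCOVERY_PLANNING
    return Playbook.RESILIENT_STRATEGY


if __name__ == "__main__":
    # Example: short on facts, but results are needed quickly.
    print(pick_playbook(have_facts=False, need_results_fast=True).value)
```

The value of writing it down this way is that it forces an explicit answer to "what do we know and what do we believe?" before any playbook is chosen.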

Apply it

Which playbook are you using and which one is best for your circumstance?

by Robyn Bolton | Jan 25, 2026 | AI, Leadership, Leading Through Uncertainty

Spain, 1896

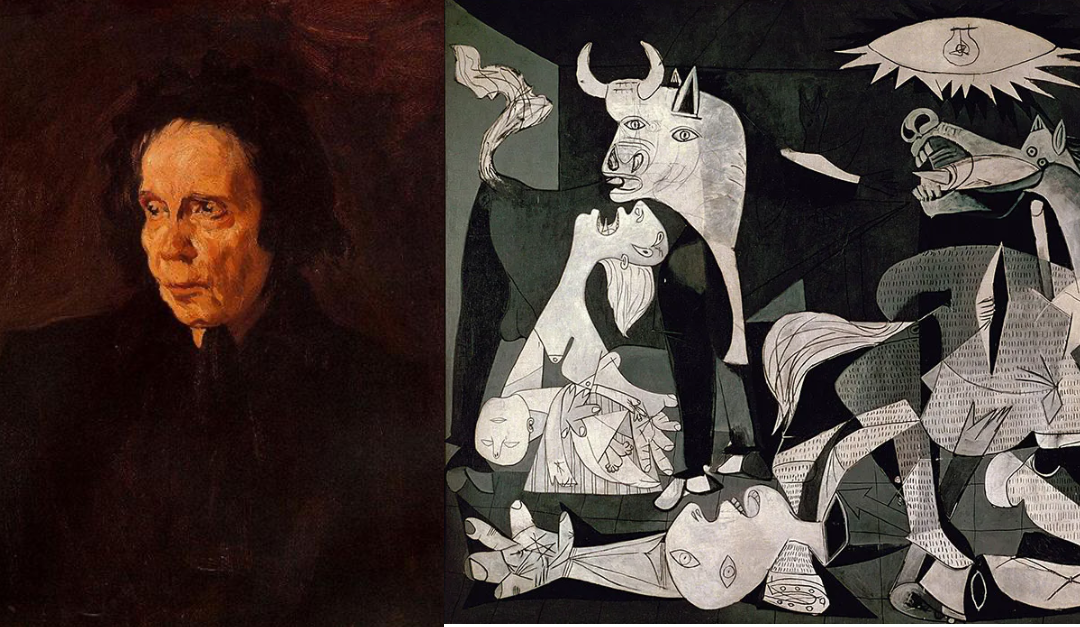

At the tender age of 14, Pablo Ruiz Picasso painted a portrait of his Aunt Pepa, a work of brilliant academic realism that would go on to be hailed as “without a doubt one of the greatest in the whole history of Spanish painting.”

In 1901, he abandoned his mastery of realism, painting only in shades of blue and blue-green.

There’s debate over why Picasso’s Blue Period began. Some argue that it’s a reflection of the poverty and desperation he experienced as a starving artist in Paris. Others claim it was a response to the suicide of his friend, Carles Casagemas. But Bill Gurley, a longtime venture capitalist, has a different theory.

Picasso abandoned realism because of the Kodak Brownie.

Introduced on February 1, 1900, the Kodak Brownie made photography widely available, fulfilling George Eastman’s promise that “you press the button, we do the rest.”

An ocean away, Gurley argues, Picasso’s “move toward abstraction wasn’t a rejection of skill; it was a recognition that realism had stopped being the frontier….So Picasso moved on, not because realism was wrong, but because it was finished.”

Washington DC, 2004

Three years before Drive took the world by storm, Daniel Pink published his third book, A Whole New Mind: Why Right-Brainers Will Rule the Future.

In it, he argues that a combination of technological advancements, higher standards of living, and access to cheaper labor are pushing us from a world that values left brain skills like linear thought, analysis, and optimization towards one that requires right brain skills like artistry, empathy, and big picture thinking.

As a result, those who succeed in the future will be able to think like designers, tell stories with context and emotional impact, and combine disparate pieces into a whole greater than the sum of its parts. Leaders will need to be empathetic, able to create “a pathway to more intense creativity and inspiration,” and guide others in the pursuit of meaning and significance.

California, 2026

Barry O’Reilly, author of Unlearn, published his monthly blog post, “Six Counterintuitive Trends to Think about for 2026,” in which he outlines what he believes will be the human reactions to a world in which AI is everywhere.

Leadership, he asserts, will cease to be measured by the resources we control (and how well we control them to extract maximum value) and will instead be measured by judgment. Specifically, a leader’s ability to:

- Ask better questions

- Frame decisions clearly

- Hold ambiguity without freezing

- Know when not to use AI

The Price of Safety vs the Promise of Greatness

Picasso walked away from a thriving and lucrative market where he was an emerging star to suffer the poverty, uncertainty, and desperation of finding what was next. It would take more than a decade for him to find international acclaim. He would spend the rest of his life as the most famous and financially successful artist in the world.

Are you willing to take that same risk?

You can cling to the safety of what you know: the markets, industries, business models, structures, and incentives that have always worked. You can continue to demand immediate efficiency, obedience, and profit while experimenting with new tech and playing with creative ideas.

Or you can start to build what’s next. You don’t have to abandon what works, just as Picasso didn’t abandon paint. But you do have to start using your resources in new ways. You must build the characteristics and capabilities that Daniel Pink outlines. You must become the “counterintuitive” leader that embraces ambiguity, role models critical thinking, and rewards creativity and risk-taking.

Do you have the courage to be counterintuitive?

Are you willing to embrace your inner Picasso?